Class 6: Advanced pandas¶

Currently, pandas’ Series and DataFrame might seem to us as no more than tables with complicated indexing methods. In this lesson, we will learn more about what makes pandas so powerful and how we can use it to write efficient and readable code.

Note

Some of the features described below only work with pandas >= 1.0.0. Make sure you have the latest pandas installation when running this notebook. To check the version of your pandas (or any other package), import it and print its __version__ attribute:

>>> import pandas as pd

>>> print(pd.__version__)

'1.2.0'

Missing Data¶

The last question in the previous class pointed us to working with missing data. But how and why do missing data occur?

One option is pandas’ index alignment, the property that makes sure that each value will have the same index throughout the entire computation process.

import pandas as pd

import numpy as np

A = pd.Series([2, 4, 6], index=[0, 1, 2])

B = pd.Series([1, 3, 5], index=[1, 2, 3])

A + B

0 NaN

1 5.0

2 9.0

3 NaN

dtype: float64

The NaNs we have are what we call missing data, and this is how they are represented in pandas. We’ll discuss that in more detail in a few moments.

The same thing occurs with DataFrames:

A = pd.DataFrame(np.random.randint(0, 20, (2, 2)),

columns=list('AB'))

A

| A | B | |

|---|---|---|

| 0 | 7 | 7 |

| 1 | 4 | 13 |

B = pd.DataFrame(np.random.randint(0, 10, (3, 3)),

columns=list('BAC'))

B

| B | A | C | |

|---|---|---|---|

| 0 | 1 | 1 | 8 |

| 1 | 9 | 0 | 6 |

| 2 | 9 | 0 | 9 |

new = A + B

print(new)

print(f"\nReturned dtypes:\n{new.dtypes}")

A B C

0 8.0 8.0 NaN

1 4.0 22.0 NaN

2 NaN NaN NaN

Returned dtypes:

A float64

B float64

C float64

dtype: object

Note

Note how new.dtypes itself returns a Series of dtypes, with it’s own object dtype.

The dataframe’s shape is the shape of the larger dataframe, and the “extra” row (index 2) was filled with NaNs. Since we have NaNs, the data type of the column is implicitly converted to a floating point type. To have integer dataframes with NaNs, we have to explicitly say we want them available. More on that later.

Another way to introduce missing data is through reindexing. If we “resample” our data we can achieve the following:

df = pd.DataFrame(np.random.randn(5, 3), index=['a', 'c', 'e', 'f', 'h'],

columns=['one', 'two', 'three'])

df

| one | two | three | |

|---|---|---|---|

| a | 0.356463 | -0.971846 | -0.194065 |

| c | -0.332752 | -0.365750 | -0.567006 |

| e | 0.350708 | 0.559431 | -0.283920 |

| f | -0.432535 | 0.315426 | 1.040877 |

| h | 0.416175 | 0.522696 | -1.776364 |

df2 = df.reindex(['a', 'b', 'c', 'd', 'e', 'f', 'g', 'h'])

df2

| one | two | three | |

|---|---|---|---|

| a | 0.356463 | -0.971846 | -0.194065 |

| b | NaN | NaN | NaN |

| c | -0.332752 | -0.365750 | -0.567006 |

| d | NaN | NaN | NaN |

| e | 0.350708 | 0.559431 | -0.283920 |

| f | -0.432535 | 0.315426 | 1.040877 |

| g | NaN | NaN | NaN |

| h | 0.416175 | 0.522696 | -1.776364 |

But what is NaN? Is it the same as None? To better answer the former, let’s first have a closer look at the latter.

The None object¶

None is the standard null value in Python, and is used extensively in normal usage of the language. For example, functions that don’t have a return statement, implicitly return None. While None can be used as a missing data type, it’s probably not the best choice.

vals1 = np.array([1, None, 3, 4])

vals1

array([1, None, 3, 4], dtype=object)

The dtype is object, because the best common type of ints and a None is a Python object. This slows down computation time on these arrays:

for dtype in ['object', 'int']:

print("dtype =", dtype)

%timeit np.arange(1E6, dtype=dtype).sum()

print()

dtype = object

83.6 ms ± 351 µs per loop (mean ± std. dev. of 7 runs, 10 loops each)

dtype = int

1.48 ms ± 8.93 µs per loop (mean ± std. dev. of 7 runs, 1000 loops each)

If you recall from a couple of lessons ago, the performance of object arrays is very similar to that of standard lists (generally speaking, the two data structures are effectively identical).

Another thing we can’t do is aggregation:

vals1.sum()

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-9-30a3fc8c6726> in <module>

----> 1 vals1.sum()

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/numpy/core/_methods.py in _sum(a, axis, dtype, out, keepdims, initial, where)

45 def _sum(a, axis=None, dtype=None, out=None, keepdims=False,

46 initial=_NoValue, where=True):

---> 47 return umr_sum(a, axis, dtype, out, keepdims, initial, where)

48

49 def _prod(a, axis=None, dtype=None, out=None, keepdims=False,

TypeError: unsupported operand type(s) for +: 'int' and 'NoneType'

The NaN value¶

NaN is a special floating-point value recognized by all programming languages that conform to the IEEE standard (which means most of them). As we mentioned before, it forces the entire array to have a floating point type:

vals2 = np.array([1, np.nan, 3, 4])

vals2.dtype

dtype('float64')

Creating floating point arrays is very fast, so performance isn’t hindered. NaN is sometimes described as a “data virus”, since it infects objects it touches:

1 + np.nan

nan

0 * np.nan

nan

vals2.sum(), vals2.min(), vals2.max()

(nan, nan, nan)

np.nan == np.nan

False

Numpy has nan-aware counterparts to many of its aggregation functions, which can work with NaNs correctly. They usually have the same name as their non-NaN sibling, but with the “nan” prefix:

print(np.nansum(vals2))

print(np.nanmean(vals2))

8.0

2.6666666666666665

However, pandas objects account for NaNs in their calculations, as we’ll soon see.

Pandas can handle both NaN and None interchangeably:

ser = pd.Series([1, np.nan, 2, None])

ser

0 1.0

1 NaN

2 2.0

3 NaN

dtype: float64

The NaT value¶

When dealing with datetime values or indices, the missing value is represented as NaT, or not-a-time:

df['timestamp'] = pd.Timestamp('20180101')

df

| one | two | three | timestamp | |

|---|---|---|---|---|

| a | 0.356463 | -0.971846 | -0.194065 | 2018-01-01 |

| c | -0.332752 | -0.365750 | -0.567006 | 2018-01-01 |

| e | 0.350708 | 0.559431 | -0.283920 | 2018-01-01 |

| f | -0.432535 | 0.315426 | 1.040877 | 2018-01-01 |

| h | 0.416175 | 0.522696 | -1.776364 | 2018-01-01 |

df2 = df.reindex(['a', 'b', 'c', 'd', 'e', 'f', 'g', 'h'])

df2

| one | two | three | timestamp | |

|---|---|---|---|---|

| a | 0.356463 | -0.971846 | -0.194065 | 2018-01-01 |

| b | NaN | NaN | NaN | NaT |

| c | -0.332752 | -0.365750 | -0.567006 | 2018-01-01 |

| d | NaN | NaN | NaN | NaT |

| e | 0.350708 | 0.559431 | -0.283920 | 2018-01-01 |

| f | -0.432535 | 0.315426 | 1.040877 | 2018-01-01 |

| g | NaN | NaN | NaN | NaT |

| h | 0.416175 | 0.522696 | -1.776364 | 2018-01-01 |

Operations and calculations with missing data¶

a = pd.DataFrame(np.random.random((5, 2)), columns=['one', 'two'])

a.iloc[1, 1] = np.nan

a

| one | two | |

|---|---|---|

| 0 | 0.855852 | 0.941375 |

| 1 | 0.220176 | NaN |

| 2 | 0.177161 | 0.666123 |

| 3 | 0.463456 | 0.615748 |

| 4 | 0.635144 | 0.913012 |

b = pd.DataFrame(np.random.random((6, 3)), columns=['one', 'two', 'three'])

b.iloc[2, 2] = np.nan

b

| one | two | three | |

|---|---|---|---|

| 0 | 0.748925 | 0.779651 | 0.499827 |

| 1 | 0.734870 | 0.858141 | 0.085762 |

| 2 | 0.268609 | 0.540138 | NaN |

| 3 | 0.365859 | 0.578249 | 0.146134 |

| 4 | 0.103976 | 0.644787 | 0.268762 |

| 5 | 0.484668 | 0.852022 | 0.565581 |

a + b

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | NaN | 1.721027 |

| 1 | 0.955046 | NaN | NaN |

| 2 | 0.445770 | NaN | 1.206261 |

| 3 | 0.829315 | NaN | 1.193997 |

| 4 | 0.739120 | NaN | 1.557799 |

| 5 | NaN | NaN | NaN |

As we see, missing values propagate naturally through these arithmetic operations. Statistics also works:

(a + b).describe()

# Summation - NaNs are zero.

# If everything is NaN - the result is NaN as well.

# pandas' cumsum and cumprod ignore NaNs but preserve them in the resulting arrays.

| one | three | two | |

|---|---|---|---|

| count | 5.000000 | 0.0 | 4.000000 |

| mean | 0.914806 | NaN | 1.419771 |

| std | 0.428908 | NaN | 0.262277 |

| min | 0.445770 | NaN | 1.193997 |

| 25% | 0.739120 | NaN | 1.203195 |

| 50% | 0.829315 | NaN | 1.382030 |

| 75% | 0.955046 | NaN | 1.598606 |

| max | 1.604778 | NaN | 1.721027 |

We can also receive a boolean mask of the NaNs in a dataframe:

mask = (a + b).isnull() # also isna(), and the opposite .notnull()

mask

| one | three | two | |

|---|---|---|---|

| 0 | False | True | False |

| 1 | False | True | True |

| 2 | False | True | False |

| 3 | False | True | False |

| 4 | False | True | False |

| 5 | True | True | True |

Filling missing values¶

The simplest option is to use the fillna method:

summed = a + b

summed.iloc[4, 0] = np.nan

summed

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | NaN | 1.721027 |

| 1 | 0.955046 | NaN | NaN |

| 2 | 0.445770 | NaN | 1.206261 |

| 3 | 0.829315 | NaN | 1.193997 |

| 4 | NaN | NaN | 1.557799 |

| 5 | NaN | NaN | NaN |

summed.fillna(0)

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | 0.0 | 1.721027 |

| 1 | 0.955046 | 0.0 | 0.000000 |

| 2 | 0.445770 | 0.0 | 1.206261 |

| 3 | 0.829315 | 0.0 | 1.193997 |

| 4 | 0.000000 | 0.0 | 1.557799 |

| 5 | 0.000000 | 0.0 | 0.000000 |

summed.fillna('missing') # changed dtype to "object"

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | missing | 1.721027 |

| 1 | 0.955046 | missing | missing |

| 2 | 0.44577 | missing | 1.206261 |

| 3 | 0.829315 | missing | 1.193997 |

| 4 | missing | missing | 1.557799 |

| 5 | missing | missing | missing |

summed.fillna(method='pad') # The NaN column remained the same, but values were propagated forward

# We can also use the "backfill" method to fill in values to the back

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | NaN | 1.721027 |

| 1 | 0.955046 | NaN | 1.721027 |

| 2 | 0.445770 | NaN | 1.206261 |

| 3 | 0.829315 | NaN | 1.193997 |

| 4 | 0.829315 | NaN | 1.557799 |

| 5 | 0.829315 | NaN | 1.557799 |

summed.fillna(method='pad', limit=1) # No more than one padded NaN in a row

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | NaN | 1.721027 |

| 1 | 0.955046 | NaN | 1.721027 |

| 2 | 0.445770 | NaN | 1.206261 |

| 3 | 0.829315 | NaN | 1.193997 |

| 4 | 0.829315 | NaN | 1.557799 |

| 5 | NaN | NaN | 1.557799 |

summed.fillna(summed.mean()) # each column received its respective mean. The NaN column is untouched.

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | NaN | 1.721027 |

| 1 | 0.955046 | NaN | 1.419771 |

| 2 | 0.445770 | NaN | 1.206261 |

| 3 | 0.829315 | NaN | 1.193997 |

| 4 | 0.958727 | NaN | 1.557799 |

| 5 | 0.958727 | NaN | 1.419771 |

Dropping missing values¶

We’ve already seen in the short exercise the dropna method, that allows us to drop missing values:

summed

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | NaN | 1.721027 |

| 1 | 0.955046 | NaN | NaN |

| 2 | 0.445770 | NaN | 1.206261 |

| 3 | 0.829315 | NaN | 1.193997 |

| 4 | NaN | NaN | 1.557799 |

| 5 | NaN | NaN | NaN |

filled = summed.fillna(summed.mean())

filled

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | NaN | 1.721027 |

| 1 | 0.955046 | NaN | 1.419771 |

| 2 | 0.445770 | NaN | 1.206261 |

| 3 | 0.829315 | NaN | 1.193997 |

| 4 | 0.958727 | NaN | 1.557799 |

| 5 | 0.958727 | NaN | 1.419771 |

filled.dropna(axis=1) # each column containing NaN is dropped

| one | two | |

|---|---|---|

| 0 | 1.604778 | 1.721027 |

| 1 | 0.955046 | 1.419771 |

| 2 | 0.445770 | 1.206261 |

| 3 | 0.829315 | 1.193997 |

| 4 | 0.958727 | 1.557799 |

| 5 | 0.958727 | 1.419771 |

filled.dropna(axis=0) # each row containing a NaN is dropped

| one | three | two |

|---|

Interpolation¶

The last way to to fill in missing values is through interpolation.

The default interpolation methods perform linear interpolation on the data, based on its ordinal index:

summed

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | NaN | 1.721027 |

| 1 | 0.955046 | NaN | NaN |

| 2 | 0.445770 | NaN | 1.206261 |

| 3 | 0.829315 | NaN | 1.193997 |

| 4 | NaN | NaN | 1.557799 |

| 5 | NaN | NaN | NaN |

summed.interpolate() # notice all the details in the interpolation of the three columns

| one | three | two | |

|---|---|---|---|

| 0 | 1.604778 | NaN | 1.721027 |

| 1 | 0.955046 | NaN | 1.463644 |

| 2 | 0.445770 | NaN | 1.206261 |

| 3 | 0.829315 | NaN | 1.193997 |

| 4 | 0.829315 | NaN | 1.557799 |

| 5 | 0.829315 | NaN | 1.557799 |

We can also interpolate with the actual index values in mind:

# Create "missing" index

timeindex = pd.Series(['1/1/2018', '1/4/2018', '1/5/2018', '1/7/2018', '1/8/2018'])

timeindex = pd.to_datetime(timeindex)

data_to_interp = [1, np.nan, 5, np.nan, 8]

df_to_interp = pd.DataFrame(data_to_interp, index=timeindex)

df_to_interp

| 0 | |

|---|---|

| 2018-01-01 | 1.0 |

| 2018-01-04 | NaN |

| 2018-01-05 | 5.0 |

| 2018-01-07 | NaN |

| 2018-01-08 | 8.0 |

df_to_interp.interpolate() # the index values aren't taken into account

| 0 | |

|---|---|

| 2018-01-01 | 1.0 |

| 2018-01-04 | 3.0 |

| 2018-01-05 | 5.0 |

| 2018-01-07 | 6.5 |

| 2018-01-08 | 8.0 |

df_to_interp.interpolate(method='index') # notice how the data obtains the "right" values

| 0 | |

|---|---|

| 2018-01-01 | 1.0 |

| 2018-01-04 | 4.0 |

| 2018-01-05 | 5.0 |

| 2018-01-07 | 7.0 |

| 2018-01-08 | 8.0 |

Pandas has many other interpolation methods, based on SciPy’s.

df_inter_2 = pd.DataFrame({'A': [1, 2.1, np.nan, 4.7, 5.6, 6.8],

'B': [.25, np.nan, np.nan, 4, 12.2, 14.4]})

df_inter_2

| A | B | |

|---|---|---|

| 0 | 1.0 | 0.25 |

| 1 | 2.1 | NaN |

| 2 | NaN | NaN |

| 3 | 4.7 | 4.00 |

| 4 | 5.6 | 12.20 |

| 5 | 6.8 | 14.40 |

df_inter_2.interpolate(method='polynomial', order=2)

| A | B | |

|---|---|---|

| 0 | 1.000000 | 0.250000 |

| 1 | 2.100000 | -2.703846 |

| 2 | 3.451351 | -1.453846 |

| 3 | 4.700000 | 4.000000 |

| 4 | 5.600000 | 12.200000 |

| 5 | 6.800000 | 14.400000 |

Missing Values in Non-Float Columns¶

Starting from pandas v1.0.0 pandas gained support for NaN values in non-float columns. This feature is a bit experimental currently, so the default behavior still converts integers to floats for example, but the support is there if you know where to look. By default:

nanint = pd.Series([1, 2, np.nan, 4])

nanint # the result has a dtype of float64 even though all numbers are integers.

0 1.0

1 2.0

2 NaN

3 4.0

dtype: float64

We can try to force pandas’ hand here, but it won’t work:

nanint = pd.Series([1, 2, np.nan, 4], dtype="int32")

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<ipython-input-42-f1daddd69d17> in <module>

----> 1 nanint = pd.Series([1, 2, np.nan, 4], dtype="int32")

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/series.py in __init__(self, data, index, dtype, name, copy, fastpath)

362 data = data.copy()

363 else:

--> 364 data = sanitize_array(data, index, dtype, copy, raise_cast_failure=True)

365

366 data = SingleBlockManager.from_array(data, index)

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/construction.py in sanitize_array(data, index, dtype, copy, raise_cast_failure)

478

479 if dtype is not None:

--> 480 subarr = _try_cast(data, dtype, copy, raise_cast_failure)

481 else:

482 subarr = maybe_convert_platform(data)

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/construction.py in _try_cast(arr, dtype, copy, raise_cast_failure)

585 if is_integer_dtype(dtype):

586 # this will raise if we have e.g. floats

--> 587 maybe_cast_to_integer_array(arr, dtype)

588 subarr = arr

589 else:

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/dtypes/cast.py in maybe_cast_to_integer_array(arr, dtype, copy)

1721 try:

1722 if not hasattr(arr, "astype"):

-> 1723 casted = np.array(arr, dtype=dtype, copy=copy)

1724 else:

1725 casted = arr.astype(dtype, copy=copy)

ValueError: cannot convert float NaN to integer

To our rescue comes the new pd.Int32Dtype:

nanint = pd.Series([1, 2, np.nan, 4], dtype="Int32")

nanint

0 1

1 2

2 <NA>

3 4

dtype: Int32

It worked! We have a series with integers and a missing value! Notice the changes we had to made:

The

NaNis<NA>now. It’s actually a new type ofNaNcalledpd.NA.The data type had to be mentioned explictly, meaning that the conversion will work only if we know in advance that we’ll have NA values.

The data type is

Int32. It’s CamelCase and it’s actually a class underneath. Standard datatypes are lowercase.

Caveats aside, this is definitely useful for scientists who sometimes have integer values and do not want to convert them to float to supports NAs.

Exercise: Missing Data

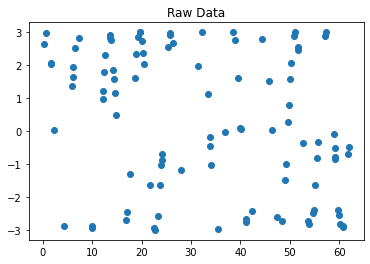

Create a vector of 10000 measurements from a 10-cycle sinus wave. Remember that a single period of sine starts at 0 and ends at 2\(\pi\), so 10 periods span between 0 and 20\(\pi\).

Solution

n_cycles = 10

n_samples = 10000

amplitude = 3

phase = np.pi / 4

end = 2 * np.pi * n_cycles

x = np.linspace(0, end, num=n_samples)

y = amplitude * np.sin(x + phase)

Using

np.random.choice(replace=False)sample 100 points from the wave and place them in a Series.

Solution

chosen_idx = np.random.choice(n_samples, size=100, replace=False)

data = pd.DataFrame(np.nan, index=x, columns=['raw'])

data.iloc[chosen_idx, 0] = y[chosen_idx]

Plot the chosen points.

Solution

fig1, ax1 = plt.subplots()

ax1.set_title('Raw data pre-interpolation')

data.raw.plot(marker='o', ax=ax1)

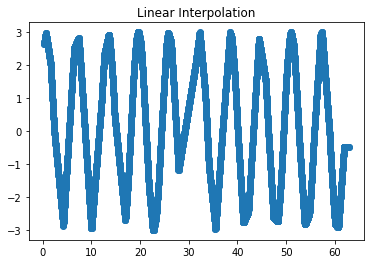

Interpolate the points using linear interpolation and plot them on a different graph.

Solution

data['lin_inter'] = data.raw.interpolate(method='index')

fig2, ax2 = plt.subplots()

ax2.set_title('Linear interpolation')

data.lin_inter.plot(marker='o', ax=ax2)

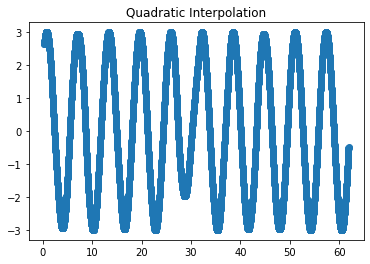

Interpolate the points using quadratic interpolation and plot them on a different graph.

Solution

data['quad_inter'] = data.raw.interpolate(method='quadratic')

fig3, ax3 = plt.subplots()

ax3.set_title('Quadratic interpolation')

data.quad_inter.plot(marker='o', ax=ax3)

Categorical Data¶

So far, we’ve used examples with quantitative data. Let’s now have a look at categorical data, i.e. data can only have one of a specific set, or categories, of values. For example, if we have a column which marks the weekday, then it can obviously only be one of seven options. Same for boolean data, colors, and other examples. These data columns should be marked as “categorical” to reduce memory consumption and improve performance. It also tells the code readers more about the nature of that data column.

The easiest way to create a categorical variable is to declare it as such, or to convert as existing column to a categorical data type:

s = pd.Series(["a", "b", "c", "a"], dtype="category")

s

0 a

1 b

2 c

3 a

dtype: category

Categories (3, object): ['a', 'b', 'c']

df = pd.DataFrame({"A": ["a", "b", "c", "a"]})

df["B"] = df["A"].astype("category")

print(f"DataFrame:\n{df}")

print(f"\nData types:\n{df.dtypes}")

DataFrame:

A B

0 a a

1 b b

2 c c

3 a a

Data types:

A object

B category

dtype: object

We can also force order between our categories, or force specific categories on our data, using the special CategoricalDtype (which we won’t show).

As we said, memory usage is reduced when working with categorical data:

df_obj = pd.DataFrame({'a': np.random.random(10_000), 'b': ['a'] * 10_000})

df_obj

| a | b | |

|---|---|---|

| 0 | 0.312997 | a |

| 1 | 0.198094 | a |

| 2 | 0.125348 | a |

| 3 | 0.441002 | a |

| 4 | 0.967082 | a |

| ... | ... | ... |

| 9995 | 0.994568 | a |

| 9996 | 0.090957 | a |

| 9997 | 0.394827 | a |

| 9998 | 0.122471 | a |

| 9999 | 0.156984 | a |

10000 rows × 2 columns

df_cat = pd.DataFrame({'a': df_obj['a'], 'b': df_obj['b'].astype('category')})

df_cat

| a | b | |

|---|---|---|

| 0 | 0.312997 | a |

| 1 | 0.198094 | a |

| 2 | 0.125348 | a |

| 3 | 0.441002 | a |

| 4 | 0.967082 | a |

| ... | ... | ... |

| 9995 | 0.994568 | a |

| 9996 | 0.090957 | a |

| 9997 | 0.394827 | a |

| 9998 | 0.122471 | a |

| 9999 | 0.156984 | a |

10000 rows × 2 columns

df_obj.memory_usage()

Index 128

a 80000

b 80000

dtype: int64

df_cat.memory_usage()

Index 128

a 80000

b 10116

dtype: int64

A factor of 8 in memory reduction.

Hierarchical Indexing¶

Last time we mentioned that while a DataFrame is inherently a 2D object, it can contain multi-dimensional data. The way a DataFrame (and a Series) does that is with hierarchical indexing, or sometimes Multi-Indexing.

Simple Example: Temperature in a Grid¶

In this example, our data is the temperature sampled across a 2-dimensional grid. First, we need to generate the required set of indices, \((x, y)\), which point to a specific location inside the square. These coordinates can then be assigned the designated temperature values. A list of such coordinates can be a simple Series:

values = np.array([1.2, 0.8, 3.1, 0.1, 0.05, 1, 1.4, 2.1, 2.9])

coords = [('r0', 'c0'), ('r0', 'c1'), ('r0', 'c2'),

('r1', 'c0'), ('r1', 'c1'), ('r1', 'c2'),

('r2', 'c0'), ('r2', 'c1'), ('r2', 'c2')] # r is row, c is column

points = pd.Series(values, index=coords, name='temperature')

points

(r0, c0) 1.20

(r0, c1) 0.80

(r0, c2) 3.10

(r1, c0) 0.10

(r1, c1) 0.05

(r1, c2) 1.00

(r2, c0) 1.40

(r2, c1) 2.10

(r2, c2) 2.90

Name: temperature, dtype: float64

It is important we understand that this is a series because the data is one-dimensional. The actual data is contained in that rightmost column, a one-dimensional array. We do have two coordinates for each point, but the data itself, the temperature, is one-dimensional.

Currently, the index is a simple tuple of coordinates. It’s a single column, containing tuples. Pandas can help us to index this data in a more intuitive manner, using a MultiIndex object.

mindex = pd.MultiIndex.from_tuples(coords)

mindex

MultiIndex([('r0', 'c0'),

('r0', 'c1'),

('r0', 'c2'),

('r1', 'c0'),

('r1', 'c1'),

('r1', 'c2'),

('r2', 'c0'),

('r2', 'c1'),

('r2', 'c2')],

)

We received something which looks quite similar to the list of tuples we had before, but it’s a MultiIndex instance. Let’s see how it helps us by reindexing our data with it:

points = points.reindex(mindex)

points

r0 c0 1.20

c1 0.80

c2 3.10

r1 c0 0.10

c1 0.05

c2 1.00

r2 c0 1.40

c1 2.10

c2 2.90

Name: temperature, dtype: float64

This looks good. Each index level is represented by a column, with the data being the last one. The “missing” values indicate that the value in that cell is the same as the value above it.

You might have assumed that accessing the data now is much more intuitive. Let’s look at the values of all the points in the first row, r0:

points.loc['r0', :] # .loc() is label-based indexing

r0 c0 1.2

c1 0.8

c2 3.1

Name: temperature, dtype: float64

Or the values of points in the second column:

points.loc[:, 'c1']

r0 0.80

r1 0.05

r2 2.10

Name: temperature, dtype: float64

points.loc[:, :] # all values - each level of the index has its own colon (:)

r0 c0 1.20

c1 0.80

c2 3.10

r1 c0 0.10

c1 0.05

c2 1.00

r2 c0 1.40

c1 2.10

c2 2.90

Name: temperature, dtype: float64

Note that .iloc disregards the MultiIndex, treating our data as a simple one-dimensional vector (as it actually is):

points.iloc[6]

# points.iloc[0, 1] # ERRORS

1.4

Besides making the syntax cleaner, these slicing operations are as efficient as their single-dimension counterparts.

It should be clear that a MultiIndex can have more than two levels. Modelling a 3D cube (with the temperatures inside it) is as easy as:

values3d = np.array([1.2, 0.8,

3.1, 0.1,

0.05, 1,

1.4, 2.1,

2.9, 0.3,

2.4, 1.9])

# 3D coordinates with a shape of (r, c, z) = (3, 2, 2)

coords3d = [('r0', 'c0', 'z0'), ('r0', 'c0', 'z1'),

('r0', 'c1', 'z0'), ('r0', 'c1', 'z1'),

('r1', 'c0', 'z0'), ('r1', 'c0', 'z1'),

('r1', 'c1', 'z0'), ('r1', 'c1', 'z1'),

('r2', 'c0', 'z0'), ('r2', 'c0', 'z1'),

('r2', 'c1', 'z0'), ('r2', 'c1', 'z1')] # we'll soon see an easier way to create this index

cube = pd.Series(values3d, index=pd.MultiIndex.from_tuples(coords3d), name='temp_cube')

cube

r0 c0 z0 1.20

z1 0.80

c1 z0 3.10

z1 0.10

r1 c0 z0 0.05

z1 1.00

c1 z0 1.40

z1 2.10

r2 c0 z0 2.90

z1 0.30

c1 z0 2.40

z1 1.90

Name: temp_cube, dtype: float64

We can even name the individual levels, which helps with some slicing operations we’ll see below:

cube.index.names = ['x', 'y', 'z']

cube

x y z

r0 c0 z0 1.20

z1 0.80

c1 z0 3.10

z1 0.10

r1 c0 z0 0.05

z1 1.00

c1 z0 1.40

z1 2.10

r2 c0 z0 2.90

z1 0.30

c1 z0 2.40

z1 1.90

Name: temp_cube, dtype: float64

Again, you have to remember that this is one-dimensional data, with a three-dimensional index. In statistical term, we might term the indices a fixed, independent categorical variable, while the values are the dependent variable. Pandas actually has a CategoricalIndex object which you’ll meet in one of your future homework assignments (but don’t be afraid to hit the link and check it out on your own if you just can’t wait).

More on extra dimensions¶

In the previous square example, it’s very appealing to ditch the MultiIndex altogether and just work with a dataframe, or even a simple NumPy array. This is because the two indices represented rows and columns. A quick way to turn one representation into the other is the stack()\unstack() method:

points.index.names = ['rows', 'columns']

points

rows columns

r0 c0 1.20

c1 0.80

c2 3.10

r1 c0 0.10

c1 0.05

c2 1.00

r2 c0 1.40

c1 2.10

c2 2.90

Name: temperature, dtype: float64

pts_df = points.unstack()

pts_df

| columns | c0 | c1 | c2 |

|---|---|---|---|

| rows | |||

| r0 | 1.2 | 0.80 | 3.1 |

| r1 | 0.1 | 0.05 | 1.0 |

| r2 | 1.4 | 2.10 | 2.9 |

pts_df.stack() # back to a series

rows columns

r0 c0 1.20

c1 0.80

c2 3.10

r1 c0 0.10

c1 0.05

c2 1.00

r2 c0 1.40

c1 2.10

c2 2.90

dtype: float64

If we want to turn the indices into “real” columns, we can use the reset_index() method:

pts_df_reset = points.reset_index()

pts_df_reset

| rows | columns | temperature | |

|---|---|---|---|

| 0 | r0 | c0 | 1.20 |

| 1 | r0 | c1 | 0.80 |

| 2 | r0 | c2 | 3.10 |

| 3 | r1 | c0 | 0.10 |

| 4 | r1 | c1 | 0.05 |

| 5 | r1 | c2 | 1.00 |

| 6 | r2 | c0 | 1.40 |

| 7 | r2 | c1 | 2.10 |

| 8 | r2 | c2 | 2.90 |

So why bother with these (you haven’t seen nothing yet) complicated multi-indices?

As you might have guessed, adding data points, i.e. increasing the dimensionality of the data, is very easy and intuitive. Data remains aligned through addition and deletion of data. Moreover, treating these categorical variables as an index can help the mental modeling of the problem, especially when you wish to perform statistical modeling with your analysis.

Constructing a MultiIndex¶

Creating a hierarchical index can be done in several ways:

pd.MultiIndex.from_arrays([['a', 'a', 'b', 'b'], [1, 2, 1, 2]])

MultiIndex([('a', 1),

('a', 2),

('b', 1),

('b', 2)],

)

pd.MultiIndex.from_tuples([('a', 1), ('a', 2), ('b', 1), ('b', 2)])

MultiIndex([('a', 1),

('a', 2),

('b', 1),

('b', 2)],

)

pd.MultiIndex.from_product([['a', 'b'], [1, 2]]) # Cartesian product

MultiIndex([('a', 1),

('a', 2),

('b', 1),

('b', 2)],

)

The most common way to construct a MultiIndex, though, is to add to the existing index one of the columns of the dataframe. We’ll see how it’s done below.

Another important note is that with dataframes, the column and row index is symmetric. In effect this means that the columns could also contain a MultiIndex:

index = pd.MultiIndex.from_product([[2013, 2014], [1, 2]],

names=['year', 'visit'])

columns = pd.MultiIndex.from_product([['Bob', 'Guido', 'Sue'], ['HR', 'Temp']],

names=['subject', 'type'])

# mock some data

data = np.round(np.random.randn(4, 6), 1)

data[:, ::2] *= 10

data += 37

# create the DataFrame

health_data = pd.DataFrame(data, index=index, columns=columns)

health_data

| subject | Bob | Guido | Sue | ||||

|---|---|---|---|---|---|---|---|

| type | HR | Temp | HR | Temp | HR | Temp | |

| year | visit | ||||||

| 2013 | 1 | 27.0 | 36.5 | 33.0 | 38.3 | 34.0 | 36.5 |

| 2 | 31.0 | 37.1 | 44.0 | 38.4 | 55.0 | 38.5 | |

| 2014 | 1 | 28.0 | 36.1 | 40.0 | 36.9 | 30.0 | 36.6 |

| 2 | 34.0 | 35.3 | 40.0 | 38.5 | 44.0 | 37.2 | |

This sometimes might seem too much, and so usually people prefer to keep the column index as a simple list of names, moving any nestedness to the row index. This is due to the fact that usually columns represent the measured dependent variable.

index = pd.MultiIndex.from_product([[2013, 2014], [1, 2], ['Bob', 'Guido', 'Sue']],

names=['year', 'visit', 'subject'])

columns = ['HR', 'Temp']

# mock some data

data = np.round(np.random.randn(12, 2), 1)

data[:, ::2] *= 10

data += 37

# create the DataFrame

health_data_row = pd.DataFrame(data, index=index, columns=columns)

health_data_row

| HR | Temp | |||

|---|---|---|---|---|

| year | visit | subject | ||

| 2013 | 1 | Bob | 26.0 | 37.2 |

| Guido | 44.0 | 36.6 | ||

| Sue | 27.0 | 36.9 | ||

| 2 | Bob | 25.0 | 36.8 | |

| Guido | 41.0 | 38.0 | ||

| Sue | 34.0 | 36.9 | ||

| 2014 | 1 | Bob | 36.0 | 36.7 |

| Guido | 38.0 | 37.4 | ||

| Sue | 38.0 | 36.5 | ||

| 2 | Bob | 47.0 | 37.3 | |

| Guido | 29.0 | 38.0 | ||

| Sue | 38.0 | 35.9 |

Creating a MultiIndex from a data column¶

While all of the above methods work, and could be useful sometimes, the most common method of creating an index is from an existing data column.

location = ['AL', 'AL', 'NY', 'NY', 'NY', 'VA']

day = ['SUN', 'SUN', 'TUE', 'WED', 'SAT', 'SAT']

temp = [12.3, 14.1, 21.3, 20.9, 18.8, 16.5]

humidity = [31, 45, 41, 41, 49, 52]

states = pd.DataFrame(dict(location=location, day=day,

temp=temp, humidity=humidity))

states

| location | day | temp | humidity | |

|---|---|---|---|---|

| 0 | AL | SUN | 12.3 | 31 |

| 1 | AL | SUN | 14.1 | 45 |

| 2 | NY | TUE | 21.3 | 41 |

| 3 | NY | WED | 20.9 | 41 |

| 4 | NY | SAT | 18.8 | 49 |

| 5 | VA | SAT | 16.5 | 52 |

states.set_index(['day'])

| location | temp | humidity | |

|---|---|---|---|

| day | |||

| SUN | AL | 12.3 | 31 |

| SUN | AL | 14.1 | 45 |

| TUE | NY | 21.3 | 41 |

| WED | NY | 20.9 | 41 |

| SAT | NY | 18.8 | 49 |

| SAT | VA | 16.5 | 52 |

states.set_index(['day', 'location'])

| temp | humidity | ||

|---|---|---|---|

| day | location | ||

| SUN | AL | 12.3 | 31 |

| AL | 14.1 | 45 | |

| TUE | NY | 21.3 | 41 |

| WED | NY | 20.9 | 41 |

| SAT | NY | 18.8 | 49 |

| VA | 16.5 | 52 |

states.set_index(['day', 'location'], append=True)

| temp | humidity | |||

|---|---|---|---|---|

| day | location | |||

| 0 | SUN | AL | 12.3 | 31 |

| 1 | SUN | AL | 14.1 | 45 |

| 2 | TUE | NY | 21.3 | 41 |

| 3 | WED | NY | 20.9 | 41 |

| 4 | SAT | NY | 18.8 | 49 |

| 5 | SAT | VA | 16.5 | 52 |

states.set_index([['i', 'ii', 'iii', 'iv', 'v', 'vi'], 'day'])

| location | temp | humidity | ||

|---|---|---|---|---|

| day | ||||

| i | SUN | AL | 12.3 | 31 |

| ii | SUN | AL | 14.1 | 45 |

| iii | TUE | NY | 21.3 | 41 |

| iv | WED | NY | 20.9 | 41 |

| v | SAT | NY | 18.8 | 49 |

| vi | SAT | VA | 16.5 | 52 |

Indexing and Slicing a MultiIndex¶

We’ll use these dataframes as an example:

health_data

| subject | Bob | Guido | Sue | ||||

|---|---|---|---|---|---|---|---|

| type | HR | Temp | HR | Temp | HR | Temp | |

| year | visit | ||||||

| 2013 | 1 | 27.0 | 36.5 | 33.0 | 38.3 | 34.0 | 36.5 |

| 2 | 31.0 | 37.1 | 44.0 | 38.4 | 55.0 | 38.5 | |

| 2014 | 1 | 28.0 | 36.1 | 40.0 | 36.9 | 30.0 | 36.6 |

| 2 | 34.0 | 35.3 | 40.0 | 38.5 | 44.0 | 37.2 | |

health_data_row

| HR | Temp | |||

|---|---|---|---|---|

| year | visit | subject | ||

| 2013 | 1 | Bob | 26.0 | 37.2 |

| Guido | 44.0 | 36.6 | ||

| Sue | 27.0 | 36.9 | ||

| 2 | Bob | 25.0 | 36.8 | |

| Guido | 41.0 | 38.0 | ||

| Sue | 34.0 | 36.9 | ||

| 2014 | 1 | Bob | 36.0 | 36.7 |

| Guido | 38.0 | 37.4 | ||

| Sue | 38.0 | 36.5 | ||

| 2 | Bob | 47.0 | 37.3 | |

| Guido | 29.0 | 38.0 | ||

| Sue | 38.0 | 35.9 |

If all we wish to do is to examine a column, indexing is very easy. Don’t forget the dataframe as dictionary analogy:

health_data['Guido'] # works for the column MultiIndex as expected

| type | HR | Temp | |

|---|---|---|---|

| year | visit | ||

| 2013 | 1 | 33.0 | 38.3 |

| 2 | 44.0 | 38.4 | |

| 2014 | 1 | 40.0 | 36.9 |

| 2 | 40.0 | 38.5 |

health_data_row['HR'] # that's a Series!

year visit subject

2013 1 Bob 26.0

Guido 44.0

Sue 27.0

2 Bob 25.0

Guido 41.0

Sue 34.0

2014 1 Bob 36.0

Guido 38.0

Sue 38.0

2 Bob 47.0

Guido 29.0

Sue 38.0

Name: HR, dtype: float64

Accessing single elements is also pretty straight-forward:

health_data_row.loc[2013, 1, 'Guido'] # index triplet

HR 44.0

Temp 36.6

Name: (2013, 1, Guido), dtype: float64

We can even slice easily using the first MultiIndex (year in our case):

health_data_row.loc[2013:2017] # 2017 doesn't exist, but Python's slicing rules prevent an exception here

# health_data_row.loc[1] # doesn't work

| HR | Temp | |||

|---|---|---|---|---|

| year | visit | subject | ||

| 2013 | 1 | Bob | 26.0 | 37.2 |

| Guido | 44.0 | 36.6 | ||

| Sue | 27.0 | 36.9 | ||

| 2 | Bob | 25.0 | 36.8 | |

| Guido | 41.0 | 38.0 | ||

| Sue | 34.0 | 36.9 | ||

| 2014 | 1 | Bob | 36.0 | 36.7 |

| Guido | 38.0 | 37.4 | ||

| Sue | 38.0 | 36.5 | ||

| 2 | Bob | 47.0 | 37.3 | |

| Guido | 29.0 | 38.0 | ||

| Sue | 38.0 | 35.9 |

Slicing is a bit more difficult when we want to take into account all available indices. This is due to the possible conflicts between the different indices and the columns.

Assuming we want to look at all the years, with all the visits, only by Bob - we would want to write something like this:

health_data_row.loc[(:, :, 'Bob'), :] # doesn't work

File "<ipython-input-80-55ad5b04417a>", line 1

health_data_row.loc[(:, :, 'Bob'), :] # doesn't work

^

SyntaxError: invalid syntax

This pickle is solved in two possible ways:

First option is the slice object:

bobs_data = (slice(None), slice(None), 'Bob') # all years, all visits, of Bob

health_data_row.loc[bobs_data, 'HR']

# arr[slice(None), 1] is the same as arr[:, 1]

year visit subject

2013 1 Bob 26.0

2 Bob 25.0

2014 1 Bob 36.0

2 Bob 47.0

Name: HR, dtype: float64

row_idx = (slice(None), slice(None), slice('Bob', 'Guido')) # all years, all visits, Bob + Guido

health_data_row.loc[row_idx, 'HR']

year visit subject

2013 1 Bob 26.0

Guido 44.0

2 Bob 25.0

Guido 41.0

2014 1 Bob 36.0

Guido 38.0

2 Bob 47.0

Guido 29.0

Name: HR, dtype: float64

Another option is the IndexSlice object:

idx = pd.IndexSlice

health_data_row.loc[idx[:, :, 'Bob'], :] # very close to the naive implementation

| HR | Temp | |||

|---|---|---|---|---|

| year | visit | subject | ||

| 2013 | 1 | Bob | 26.0 | 37.2 |

| 2 | Bob | 25.0 | 36.8 | |

| 2014 | 1 | Bob | 36.0 | 36.7 |

| 2 | Bob | 47.0 | 37.3 |

idx2 = pd.IndexSlice

health_data_row.loc[idx2[2013:2015, 1, 'Bob':'Guido'], 'Temp']

year visit subject

2013 1 Bob 37.2

Guido 36.6

2014 1 Bob 36.7

Guido 37.4

Name: Temp, dtype: float64

Finally, there’s one more way to index into a MultiIndex which is very straight-forward and explicit; the cross-section.

health_data_row.xs(key=(2013, 1), level=('year', 'visit'))

| HR | Temp | |

|---|---|---|

| subject | ||

| Bob | 26.0 | 37.2 |

| Guido | 44.0 | 36.6 |

| Sue | 27.0 | 36.9 |

Small caveat: unsorted indices¶

Having an unsorted index in your MultiIndex might make the interpreter pop a few exceptions at you:

# char index in unsorted

index = pd.MultiIndex.from_product([['a', 'c', 'b'], [1, 2]])

data = pd.Series(np.random.rand(6), index=index)

data.index.names = ['char', 'int']

data

char int

a 1 0.401202

2 0.473541

c 1 0.722017

2 0.528039

b 1 0.244234

2 0.562101

dtype: float64

data['a':'b']

---------------------------------------------------------------------------

UnsortedIndexError Traceback (most recent call last)

<ipython-input-87-0e6568674d17> in <module>

----> 1 data['a':'b']

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/series.py in __getitem__(self, key)

875 return self._get_values(key)

876

--> 877 return self._get_with(key)

878

879 def _get_with(self, key):

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/series.py in _get_with(self, key)

882 # _convert_slice_indexer to determine if this slice is positional

883 # or label based, and if the latter, convert to positional

--> 884 slobj = self.index._convert_slice_indexer(key, kind="getitem")

885 return self._slice(slobj)

886 elif isinstance(key, ABCDataFrame):

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/indexes/base.py in _convert_slice_indexer(self, key, kind)

3388 indexer = key

3389 else:

-> 3390 indexer = self.slice_indexer(start, stop, step, kind=kind)

3391

3392 return indexer

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/indexes/base.py in slice_indexer(self, start, end, step, kind)

5275 slice(1, 3, None)

5276 """

-> 5277 start_slice, end_slice = self.slice_locs(start, end, step=step, kind=kind)

5278

5279 # return a slice

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/indexes/multi.py in slice_locs(self, start, end, step, kind)

2745 # This function adds nothing to its parent implementation (the magic

2746 # happens in get_slice_bound method), but it adds meaningful doc.

-> 2747 return super().slice_locs(start, end, step, kind=kind)

2748

2749 def _partial_tup_index(self, tup, side="left"):

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/indexes/base.py in slice_locs(self, start, end, step, kind)

5474 start_slice = None

5475 if start is not None:

-> 5476 start_slice = self.get_slice_bound(start, "left", kind)

5477 if start_slice is None:

5478 start_slice = 0

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/indexes/multi.py in get_slice_bound(self, label, side, kind)

2689 if not isinstance(label, tuple):

2690 label = (label,)

-> 2691 return self._partial_tup_index(label, side=side)

2692

2693 def slice_locs(self, start=None, end=None, step=None, kind=None):

/opt/hostedtoolcache/Python/3.8.9/x64/lib/python3.8/site-packages/pandas/core/indexes/multi.py in _partial_tup_index(self, tup, side)

2749 def _partial_tup_index(self, tup, side="left"):

2750 if len(tup) > self.lexsort_depth:

-> 2751 raise UnsortedIndexError(

2752 f"Key length ({len(tup)}) was greater than MultiIndex lexsort depth "

2753 f"({self.lexsort_depth})"

UnsortedIndexError: 'Key length (1) was greater than MultiIndex lexsort depth (0)'

lexsort means “lexicography-sorted”, or sorted by either number or letter. Sorting an index is done with the sort_index() method:

data.sort_index(inplace=True)

print(data)

print(data['a':'b']) # now it works

char int

a 1 0.401202

2 0.473541

b 1 0.244234

2 0.562101

c 1 0.722017

2 0.528039

dtype: float64

char int

a 1 0.401202

2 0.473541

b 1 0.244234

2 0.562101

dtype: float64

Data Aggregation¶

Data aggregation using a MultiIndex is super simple:

states

| location | day | temp | humidity | |

|---|---|---|---|---|

| 0 | AL | SUN | 12.3 | 31 |

| 1 | AL | SUN | 14.1 | 45 |

| 2 | NY | TUE | 21.3 | 41 |

| 3 | NY | WED | 20.9 | 41 |

| 4 | NY | SAT | 18.8 | 49 |

| 5 | VA | SAT | 16.5 | 52 |

states.set_index(['location', 'day'], inplace=True)

states

| temp | humidity | ||

|---|---|---|---|

| location | day | ||

| AL | SUN | 12.3 | 31 |

| SUN | 14.1 | 45 | |

| NY | TUE | 21.3 | 41 |

| WED | 20.9 | 41 | |

| SAT | 18.8 | 49 | |

| VA | SAT | 16.5 | 52 |

states.mean(level='location')

| temp | humidity | |

|---|---|---|

| location | ||

| AL | 13.200000 | 38.000000 |

| NY | 20.333333 | 43.666667 |

| VA | 16.500000 | 52.000000 |

states.median(level='day')

| temp | humidity | |

|---|---|---|

| day | ||

| SUN | 13.20 | 38.0 |

| TUE | 21.30 | 41.0 |

| WED | 20.90 | 41.0 |

| SAT | 17.65 | 50.5 |

Exercise: Replacing Values

Hint

When we wish to replace values in a Series or a DataFrame, two main options come to mind:

A boolean mask (e.g.

df[mask] = "new value").The

replace()method.

In the following exercise try and explore the second method, which provides powerful custom replacement options.

Create a (10, 2) dataframe with increasing integer values 0-9 in both columns.

Solution

data = np.tile(np.arange(10), (2, 1)).T

df = pd.DataFrame(data)

Use the

.replace()method to replace the value 3 in the first column with 99.

Solution

df.replace({0: 3}, {0: 99})

Use it to replace 3 in column 0, and 1 in column 2, with 99.

Solution

df.replace({0: 3, 1: 1}, 99)

Use its

methodkeyword to replace values in the range [3, 6) of the first column with 6.

Solution

df[0].replace(np.arange(3, 6), method='bfill')

MultiIndex Construction and Indexing

Construct a

MultiIndexwith three levels composed from the product of the following lists:['a', b', 'c', 'd']['i', 'ii', 'iii']['x', 'y', 'z']

Solution

letters = ['a', 'b', 'c', 'd']

roman = ['i', 'ii', 'iii']

coordinates = ['x', 'y', 'z']

index = pd.MultiIndex.from_product((letters, roman, coordinates))

Instantiate a dataframe with the created index and populate it with random values in two columns.

Solution

size = len(letters) * len(roman) * len(coordinates)

data = np.random.randint(20, size=(size, 2))

df = pd.DataFrame(data, columns=['today', 'tomorrow'], index=index)

Use two different methods to extract only the values with an index of

('a', 'ii', 'z').

Solution

Option #1:

df.loc['a', 'ii', 'z']

Option #2:

df.xs(key=('a', 'ii', 'z'))

Option #3:

idx = pd.IndexSlice

df.loc[idx['a', 'ii', 'z'], :]

Slice in two ways the values with an index of

'x'.

Solution

Option #1:

idx = pd.IndexSlice

df.loc[idx[:, :, 'x'], :]

Option #2:

df.xs(key='x', level=2)

Option #3:

df.loc[(slice(None), slice(None), 'x'), :]

n-Dimensional Containers¶

While technically a dataframe is a two-dimensional container, in the next lesson we’ll see why it can perform quite efficiently as a pseudo n-dimensional container.

If you wish to have true n-dimensional DataFrame-like data structures, you should use the xarray package and its xr.DataArray and xr.Dataset objects, which we’ll discuss in the next lessons.